Artifacts

noun. /ahr-tuh-fakt/

An object remaining from a particular time or event.

Evidence of a system’s behavior that persists after the intent has faded.

Most industry writing focuses on how things are supposed to work. These files examine how they actually break. They are not tied to a calendar, because the tension between Capital and Capacity has no expiration date.

The Long Tail of Poorly Installed Passive Infrastructure

Why “working today” is not the same as being lifecycle-ready

There is a quiet assumption underneath many infrastructure investment cases:

Once the passive infrastructure is in the ground, the hardest and most expensive part of the job is behind us.

For fiber networks, that assumption is understandable. Initial deployment is where the largest capital burden sits: trenching, boring, permitting, labor, restoration, materials, access coordination, and field execution. Once the fiber is placed, the business case often assumes the passive layer will remain useful for decades.

The active equipment can be refreshed later. Electronics can be upgraded. Capacity can be expanded. Faster speeds can be offered as technology improves.

That is the beauty of fiber economics:

Pay the big bill once.

Preserve the passive layer.

Upgrade around it over time.

But that model only works if the passive infrastructure was installed properly in the first place.

Infrastructure should not be accepted merely because it works on the day of handoff. It should be accepted because it can support the lifecycle economics, maintainability, resilience, and expansion assumptions built into the investment case.

This matters because there is already a hefty bill being paid for this layer. The passive network is not a disposable component. It is the most capital-intensive, hardest-to-replace, longest-life part of the system. It is the foundation upon which future growth depends.

That is what makes weak acceptance so hard to defend.

The industry often spends the most money on the layer that carries the greatest long-term exposure, then accepts that layer with limited evidence that it was installed in a way that protects the investment.

That is not capital discipline.

That is unpriced risk.

The false comfort of “it works”

One of the most dangerous phrases in infrastructure is:

“It works.”

The fiber passes a basic continuity test.

The customer comes online.

The insertion loss appears acceptable.

The route is closed out.

The asset is placed in service.

On paper, everything looks acceptable.

But a network can function on day one while still carrying defects that become expensive later.

A missing terminal may not break service immediately.

A weak demarcation point may not show up in a basic acceptance check.

A poorly protected pathway may not fail on day one.

A route with poor records may still activate customers.

A fiber can pass a basic continuity test while still carrying hidden weaknesses — macro-bends, poor splice quality, excess loss, or reflection events that may show up in OTDR results, degraded signal margin, or future trouble tickets.
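To make the gap between continuity and quality concrete, here is a minimal Python sketch. The event kinds, the loss and reflectance limits, the attenuation figure, and the power budget are all illustrative assumptions rather than values from a standard or from any particular network; a real acceptance specification would supply them.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative limits only (assumptions, not taken from any standard).
MAX_EVENT_LOSS_DB = {"splice": 0.10, "connector": 0.50, "bend": 0.10}
MAX_REFLECTANCE_DB = -45.0  # closer to zero means more reflective, i.e. worse


@dataclass
class OtdrEvent:
    distance_m: float
    kind: str  # "splice", "connector", or "bend"
    loss_db: float
    reflectance_db: Optional[float] = None


def passes_continuity(events, fiber_loss_db, power_budget_db):
    """Light reaches the far end if total loss stays within the power budget."""
    total = fiber_loss_db + sum(e.loss_db for e in events)
    return total <= power_budget_db


def flag_events(events):
    """Describe every event that exceeds its illustrative limit."""
    flags = []
    for e in events:
        limit = MAX_EVENT_LOSS_DB[e.kind]
        if e.loss_db > limit:
            flags.append(
                f"{e.kind} at {e.distance_m:.0f} m: "
                f"loss {e.loss_db:.2f} dB exceeds {limit:.2f} dB"
            )
        if e.reflectance_db is not None and e.reflectance_db > MAX_REFLECTANCE_DB:
            flags.append(
                f"{e.kind} at {e.distance_m:.0f} m: "
                f"reflectance {e.reflectance_db:.1f} dB exceeds {MAX_REFLECTANCE_DB:.1f} dB"
            )
    return flags


# Hypothetical trace for a 2.4 km link.
trace = [
    OtdrEvent(500, "splice", 0.05),
    OtdrEvent(1200, "splice", 0.35),
    OtdrEvent(1850, "bend", 0.40),
    OtdrEvent(2400, "connector", 0.30, reflectance_db=-35.0),
]
fiber_loss_db = 2.4 * 0.35  # assumed 0.35 dB/km attenuation

print("continuity:", passes_continuity(trace, fiber_loss_db, power_budget_db=25.0))
for line in flag_events(trace):
    print("flag:", line)
```

In this hypothetical trace the link passes continuity comfortably, yet three events would be flagged under the assumed limits. That is the condition a basic handoff test leaves unrecorded.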

The problem is not always visible at handoff.

And to be clear, not every future issue can be known at handoff. Infrastructure will always face weather, excavation damage, aging, customer behavior, animal damage, construction conflicts, and human error.

The goal is not perfect foresight.

The goal is to reduce the amount of risk that is knowable yet unverified.

Because if a defect is visible, recordable, measurable, or reasonably foreseeable, but no one records it, rates it, prices it, or assigns ownership to it, the defect does not disappear. It simply moves forward into operations.

Eventually, it returns as low light, repeat truck rolls, outage tickets, emergency repairs, customer escalations, longer mean time to repair, unplanned capex, or premature asset replacement.

By then, the original construction decision is often long forgotten.

The repair team inherits the symptom.

The finance team sees the overrun.

The customer feels the outage.

The asset record remains incomplete.

And the organization convinces itself that it has a maintenance problem.

Sometimes it does.

But often, it has a handoff problem.

The small saving that becomes a large liability

Many poor infrastructure decisions begin as reasonable attempts to control cost.

No executive wakes up and says, “Let’s weaken the future network.”

Teams are trying to manage capital, hit deployment targets, reduce unit cost, accelerate construction, and make the business case work.

That is understandable.

But capital discipline becomes dangerous when it is applied only to the construction window and not to the lifecycle of the asset.

A small avoided cost during deployment can become a recurring operating liability if it introduces fragility into the passive network.

Consider something as simple as skipping a terminal or failing to create a clean service interface.

During construction, the decision may appear to save money. The network still works. Customers can still be served. The build can still close.

But years later, every new drop, repair, check, or service activity may require technicians to open a handhole, disturb a splice case, handle live fiber, search for the correct fiber pair, and perform work in an environment that should have remained protected.

The organization may have avoided a small material cost, but it created repeated exposure to a much larger risk.

A missing terminal is not just a missing terminal. It is future handling risk.

A weak demarcation point is not just a design detail. It is ownership and accountability risk.

An inaccessible splice location is not just an inconvenience. It is mean-time-to-repair risk.

Poor records are not just administrative sloppiness. They are troubleshooting, locate, and damage-prevention risk.

This is where field details become financial signals.

The field technician may say:

“This design is bad. Every time we need to do routine work, we have to disturb the splice case.”

A finance leader may hear:

“Field teams are complaining about construction preferences.”

But translated into capital language, the same concern becomes:

“The asset was accepted with a maintainability defect that increases future repair exposure, extends restoration time, and raises the probability of lifecycle cost variance.”

That is the translation the industry needs.

Because once the relationship between field condition and financial consequence is lost, organizations start making decisions with incomplete truth.

A simple illustration

Imagine a deployment decision saves $200 by avoiding a terminalized access point.

That $200 may look responsible inside the construction budget.

But if the absence of that terminal causes just one additional truck roll every two years at $750 each, the first extra dispatch alone costs more than the saving, and by year four the direct cost has reached $1,500. That does not include added handling time, customer disruption, degraded signal margin, repeat troubleshooting, or the increased risk created every time technicians disturb adjacent fibers that should have remained protected.

The larger issue is scale. Across 10,000 homes passed, a $200 avoided cost can look like a $2 million capex saving. But if the design increases routine handling, repeat dispatches, and degradation risk across the asset base, that “saving” can become a multi-year opex bleed.

The exact numbers will vary by network, geography, labor model, and access conditions.

But the pattern is the point:

A construction saving is not a saving if it transfers cost into the operating life of the asset.
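The arithmetic behind this illustration can be sketched in a few lines of Python. The $200 saving, $750 dispatch cost, two-year interval, and 10,000 homes come from the example above. The assumption that each dispatch lands at the end of its interval, the ten-year horizon, and the `affected_share` parameter are hypothetical and exist only to show how the pattern scales.

```python
def cumulative_dispatch_cost(years, cost_per_roll, interval_years):
    """Direct cost of extra truck rolls after `years`, one roll per interval."""
    return (years // interval_years) * cost_per_roll


def break_even_year(saving, cost_per_roll, interval_years):
    """First year in which cumulative dispatch cost reaches the saving."""
    if cost_per_roll <= 0 or interval_years <= 0:
        raise ValueError("cost_per_roll and interval_years must be positive")
    year = interval_years
    while cumulative_dispatch_cost(year, cost_per_roll, interval_years) < saving:
        year += interval_years
    return year


saving = 200.0         # avoided terminal cost per location
cost_per_roll = 750.0  # direct cost of one truck roll
interval_years = 2     # one extra roll every two years

print(break_even_year(saving, cost_per_roll, interval_years))      # 2
print(cumulative_dispatch_cost(4, cost_per_roll, interval_years))  # 1500.0

# Scale: the capex "saving" versus direct dispatch cost over a horizon.
homes = 10_000
affected_share = 0.25  # hypothetical share of locations that see the extra rolls
horizon_years = 10     # hypothetical planning horizon

capex_saving = saving * homes
opex_exposure = affected_share * homes * cumulative_dispatch_cost(
    horizon_years, cost_per_roll, interval_years
)
print(f"capex saving:  ${capex_saving:,.0f}")
print(f"opex exposure: ${opex_exposure:,.0f}")
```

Changing `affected_share` or the horizon moves the totals, but the shape of the result stays the same: the saving is booked once, while the dispatch cost recurs.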

Passive infrastructure carries future optionality

The passive network is not valuable only because it serves today’s customers. It is valuable because it preserves tomorrow’s options.

That is especially true in fiber.

The fiber in the ground is expected to outlive multiple generations of active equipment. Electronics may be refreshed every several years. Capacity may expand. New services may launch. Enterprise demand may grow. Data center connectivity may become more important. AI-driven workloads may increase bandwidth pressure in ways that were not fully modeled at the time of construction.

The passive layer is supposed to make those future upgrades economically efficient.

The business logic is straightforward:

Build the expensive buried layer once.

Keep it healthy.

Upgrade the active layer over time.

Use the same physical foundation to unlock future revenue.

But if the passive layer is compromised, that future optionality is weakened.

Poor installation does not simply create more repair work. It can reduce the owner’s ability to expand, upgrade, or monetize the network when demand increases.

That is where the cost becomes much larger than a repair invoice.

If the buried fiber becomes unreliable earlier than expected, the owner may be forced into major remediation or replacement just when it expected to benefit from a lower-cost electronics upgrade. Instead of using capital to expand capacity, improve service, or capture new demand, the company must spend capital repairing the foundation.

The network may still exist physically, but economically it has become constrained.

That is the long tail of poorly installed passive infrastructure.

The AI era raises the cost of weak foundations

For years, a degraded route or fiber cut may have been understood mostly as a service issue.

A customer lost internet.

A neighborhood had an outage.

A repair crew was dispatched.

The network was restored.

That was serious, but the economic story was often contained within customer experience, repair cost, and service-level performance.

The AI era changes the stakes.

As more compute capacity, data center interconnection, cloud infrastructure, edge workloads, and high-bandwidth services depend on reliable connectivity, the passive network becomes strategically heavier.

Fiber is no longer just a broadband access asset. It is part of the foundation for digital economies, AI infrastructure, cloud services, enterprise resilience, and national competitiveness.

That means the cost of weak passive infrastructure is rising.

A poorly installed route may not only create maintenance volatility. It may slow expansion, complicate capacity upgrades, reduce confidence in a corridor, and force operators to spend scarce capital fixing old defects instead of building new capability.

In a slower-demand environment, some hidden defects can remain tolerable for longer.

In an AI-driven infrastructure cycle, the margin for hidden weakness gets smaller.

The network either has the integrity to support future demand, or it becomes a constraint.

The handoff problem

Most infrastructure organizations are good at measuring project completion.

They know whether the route was built.

They know whether customers can be activated.

They know whether the project closed.

They know whether the asset entered service.

But they are often weaker at measuring what kind of asset was actually handed over.

Was it maintainable?

Was it properly protected?

Were exceptions documented?

Were technical debts carried forward?

Were access dependencies understood?

Were ownership boundaries clear?

Were future repair risks visible?

Were records accurate enough to support future operations?

This is the handoff problem.

The moment of handoff is where construction reality becomes operating reality. If the asset is accepted with hidden defects, those defects become the responsibility of operations, finance, customers, and future capital plans.

By the time the problem becomes visible in repair data, the opportunity to fix it cheaply may have passed.

The best time to prevent long-tail infrastructure cost is before the asset is accepted.

Not after the third repeat dispatch.

Not after a customer escalation.

Not after emergency repairs accumulate.

Not after a route needs premature remediation.

At handoff.

That is where the truth should be captured.

Certification-grade acceptance

Infrastructure acceptance needs to evolve.

The question should not be:

“Does it work today?”

The better question is:

“Can this asset support the lifecycle economics, maintainability, resilience, and expansion assumptions used to justify the investment?”

That requires more than basic closeout paperwork.

It requires evidence.

Evidence of installation quality.

Evidence of location accuracy.

Evidence of depth and protection where required.

Evidence of proper access points.

Evidence of demarcation clarity.

Evidence of maintainability.

Evidence of standards compliance.

Evidence of exceptions.

Evidence of who accepted those exceptions and why.

Not every exception means the asset should fail acceptance.

Infrastructure is full of tradeoffs. There will always be constraints: terrain, permitting, access, cost, schedule, customer needs, commercial pressure, and local realities.

This is where infrastructure needs to borrow a concept from software: technical debt.

Technical debt is not always irrational. Sometimes teams accept a compromise because of cost, schedule, access, permitting, or commercial pressure.

The problem is not the existence of technical debt.

The problem is unrecorded technical debt.

The mature position is not:

“Fix everything immediately.”

The mature position is:

“Know what risk you are accepting.”

If an asset is accepted with a known maintainability issue, design exception, access constraint, or documentation gap, that decision should be explicit. It should be risk-rated, assigned, and carried forward into the lifecycle record.

Otherwise, the debt does not disappear.

It simply accrues interest in operations.
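One way to picture recorded technical debt is as a small exception register that travels with the asset. The sketch below is a hypothetical Python data structure, not an established schema: the field names, the three-level risk scale, and the sample asset IDs are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class RiskRating(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class AcceptedException:
    asset_id: str
    description: str
    accepted_on: date
    risk: Optional[RiskRating] = None  # risk-rated
    owner: Optional[str] = None        # assigned
    accepted_by: Optional[str] = None  # who accepted it
    reason: Optional[str] = None       # and why

    def gaps(self):
        """Names of the fields that would leave this exception unrecorded."""
        required = {
            "risk": self.risk,
            "owner": self.owner,
            "accepted_by": self.accepted_by,
            "reason": self.reason,
        }
        return [name for name, value in required.items() if value is None or value == ""]


def unrecorded_debt(register):
    """Map each asset with an incomplete exception record to its missing fields."""
    return {e.asset_id: e.gaps() for e in register if e.gaps()}


# Hypothetical entries for two handholes.
register = [
    AcceptedException(
        asset_id="HH-0142",
        description="No terminal; drops spliced directly in the handhole",
        accepted_on=date(2024, 5, 1),
        risk=RiskRating.HIGH,
        accepted_by="Project manager",
        reason="Schedule pressure",
    ),
    AcceptedException(
        asset_id="HH-0217",
        description="As-built location not surveyed",
        accepted_on=date(2024, 5, 3),
        risk=RiskRating.MEDIUM,
        owner="Records team",
        accepted_by="Project manager",
        reason="Access restriction at closeout",
    ),
]

print(unrecorded_debt(register))
```

Here the first exception is rated and explained but has no owner, so it surfaces as unrecorded debt. The second is complete and can be carried into the lifecycle record as a known, accepted risk.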

The tragedy of financed defects

The tragedy is not that infrastructure fails.

The deeper tragedy is that many failures are financed at birth.

They are created when an asset is accepted with hidden defects, weak evidence, poor maintainability, unclear ownership boundaries, or unresolved technical debt.

The money has already been spent.

The asset has already been capitalized.

The useful life has already been assumed.

The future upgrade path has already been modeled.

But the field reality was never fully certified.

That is what makes the long tail so costly.

The organization pays the big bill upfront, but does not always confirm that the asset received is capable of protecting that bill over time.

The passive layer carries the highest economic burden and the greatest future optionality. It should not enter service on trust alone.

It should enter service with proof.

The real cost of cutting corners

Executives trying to control deployment cost are not trying to harm the future business.

But systems often reward short-term construction efficiency while failing to price long-term operational exposure.

That is how reasonable decisions become expensive ones.

A skipped part.

A weaker method.

A poorly documented exception.

A fragile access design.

A shortcut that passes today’s test.

A closeout that hides tomorrow’s risk.

Each decision may seem small.

But passive infrastructure has a long memory.

The network remembers what was skipped.

Operations pays for what was ignored.

Customers experience what was deferred.

Finance eventually sees what was not priced.

And in an AI-driven infrastructure cycle, the cost of those decisions is getting higher.

The future will demand more from the passive layer, not less.

That means the industry can no longer afford to treat installation assurance as a back-office quality activity. It is capital protection. It is lifecycle risk management. It is the foundation for future expansion.

The passive network is where tomorrow’s growth is either protected or quietly compromised.

That is why “working today” is no longer enough.

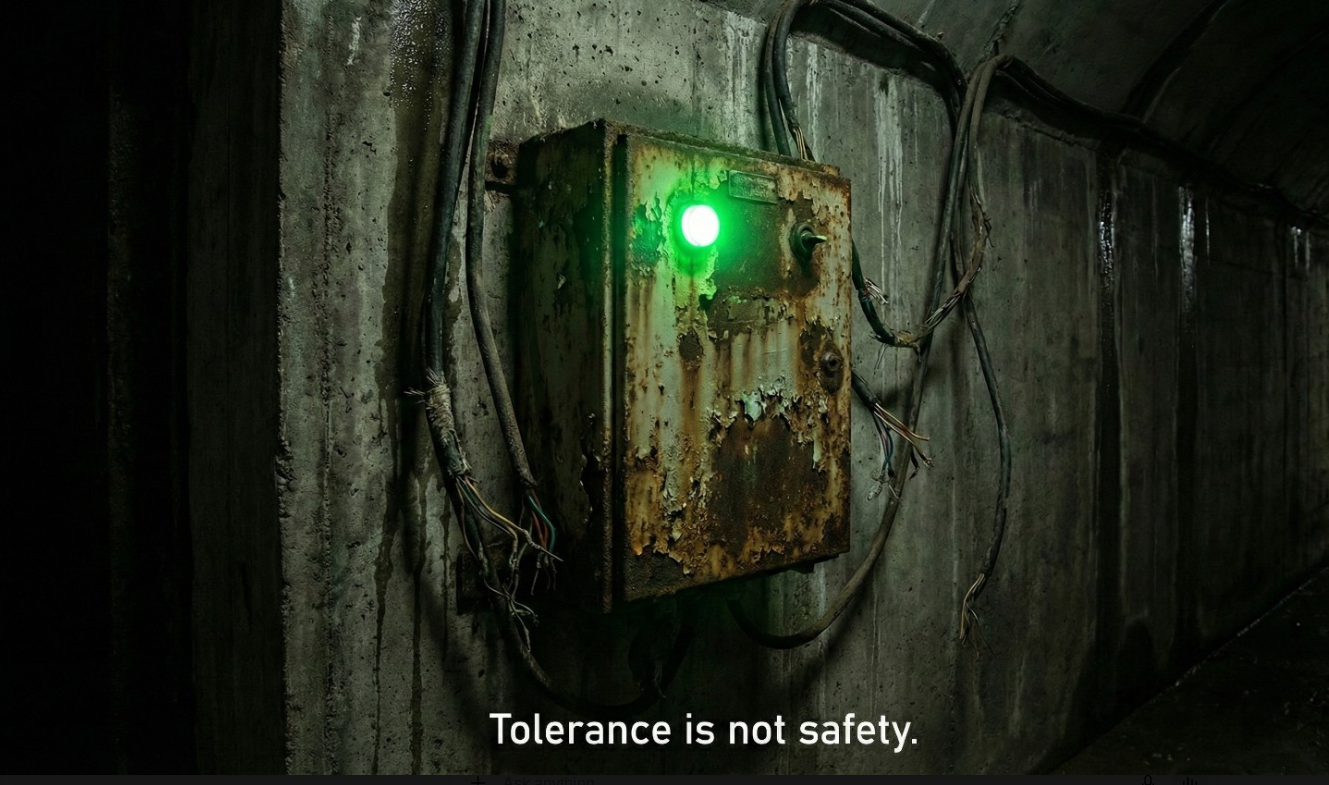

The System Was Born With the Defect

Some systems do not fail because something suddenly went wrong.

They fail because something was never quite right at the beginning.

The design was accepted with assumptions still unresolved. The build was closed with records that were good enough for the moment. The handoff moved forward because the project needed to be completed. The inspection found enough to proceed, but not enough to truly know. The asset entered service, customers were connected, revenue started flowing, and the organization moved on.

For a while, nothing obvious happens.

That is what makes the defect dangerous.

A system can be born poorly and still function for years. The early signs may not look like failure. They may look like minor inefficiencies, recurring explanations, unusual workarounds, small exceptions, or field knowledge that never makes it into the official record. The system works because people compensate. Teams adapt. Vendors explain. Operators patch the gaps. Leaders see the output and assume the foundation is sound.

But usefulness is not the same as health.

A system that works today may still be carrying a defect from the moment it was accepted.

The Problem With “Good Enough”

Operational systems are often born under pressure.

Projects have deadlines. Capital has been allocated. Crews need to move. Customers are waiting. Leadership wants completion. Contractors want closeout. Finance wants the asset placed into service. Everyone has a reason to move forward.

In that environment, “good enough” can feel practical.

The record is good enough.

The inspection is good enough.

The closeout package is good enough.

The asset location is good enough.

The handoff is good enough.

The exception is small enough to accept.

And sometimes, it is.

Not every imperfection is a crisis. Not every missing detail deserves executive attention. Operations would grind to a halt if every small issue became a formal escalation.

But there is a difference between tolerating imperfection and institutionalizing uncertainty.

That difference matters.

When a system is accepted without a clear record of what was built, where it was built, how it was verified, who verified it, and what exceptions were allowed, the organization has not merely accepted an operational gap. It has created future debt.

The problem is that the debt rarely comes due immediately.

It waits.

The Defect Hides Inside Normal Operations

A poorly born system can be surprisingly resilient in the short term.

That is because operational environments are full of human buffers. Experienced technicians remember where the real problem areas are. Local teams know which records cannot be trusted. Supervisors know which vendor needs extra watching. Engineers know which drawings require interpretation. Field crews know which routes are “always weird.”

None of this may show up cleanly in the system of record.

But the work continues.

This is how organizations confuse adaptation with resolution. The field learns to live with the defect, so leadership stops seeing it. Workarounds become normal. Exceptions become tribal knowledge. Recurring issues become “just how that area is.” The organization does not eliminate the gap. It simply learns how to survive around it.

Until the environment changes.

A new construction project begins nearby. A road widening exposes weak records. A utility conflict reveals that the asset is not where it was supposed to be. A vendor dispute forces everyone to prove what was done. A major outage requires fast isolation, but the records cannot support the decision. A merger brings two systems of record together and exposes how much was assumed. A margin squeeze removes the tolerance for repeated truck rolls, rework, and avoidable escalations.

Suddenly, the organization needs truth.

Not confidence.

Not memory.

Not “the vendor said.”

Not “we have always done it this way.”

Truth.

And that is when the original defect becomes visible.

Technical Debt Comes Due With Interest

Technical debt is often discussed as if it belongs mainly to software.

It does not.

Infrastructure carries technical debt. Operations carries technical debt. Field processes carry technical debt. Vendor ecosystems carry technical debt. Asset records carry technical debt. Every accepted uncertainty becomes a form of debt if the organization depends on that uncertainty later.

The danger is that operational debt compounds quietly.

A missing record today becomes a slower repair later.

A weak handoff today becomes a vendor dispute later.

An unverifiable installation today becomes a locate problem later.

A poorly documented repair today becomes a repeat outage later.

An accepted exception today becomes tomorrow’s margin leak.

At first, the organization absorbs the cost through effort.

Then through budget.

Then through customer experience.

Then through strategic constraint.

By the time the pain becomes visible, the question is no longer, “Why did this happen?”

The better question is, “When did we first accept the condition that made this possible?”

Often, the answer is uncomfortable.

It was there at the beginning.

Maturity Reduces the Room for Hidden Gaps

In growing markets, inefficiency can hide inside expansion.

When demand is strong, capital is flowing, and the priority is footprint growth, organizations can tolerate a surprising amount of operational looseness. The system is rewarded for moving fast. The gaps are real, but they are not yet decisive.

Mature markets are different.

Growth slows. Margins tighten. Competition increases. Capital becomes more selective. Customers expect reliability. Regulators demand more discipline. Investors ask harder questions. Consolidation begins to reveal what fragmentation once concealed.

This is when operators discover that the hidden gaps were never free.

They were just being financed by growth, tolerance, and momentum.

As industries mature, the cost of poor beginnings becomes harder to ignore. Organizations can no longer afford to keep rediscovering the same defects through outages, rework, claims, disputes, and emergency spending. The operating model has to become more disciplined because the market no longer provides enough slack to absorb avoidable uncertainty.

This is not just a broadband problem.

It shows up in utilities, transportation, data centers, energy, public infrastructure, supply chains, software platforms, and enterprise operations. Anywhere systems are built under pressure, accepted with incomplete truth, and then expected to perform for years, the same pattern appears.

The system works.

Until it is asked to prove itself.

Verification Is Not Bureaucracy

One reason organizations underinvest in verification is that verification is often framed as administrative overhead.

Another checklist.

Another review.

Another delay.

Another governance layer.

Another person asking for evidence when everyone already knows the work is done.

That framing misses the point.

Verification is not the enemy of speed. Poorly designed verification can be. Bureaucracy can be. Redundant approvals can be. But verification itself is not the problem.

Verification is how an organization protects the future from the assumptions of the present.

It gives leaders confidence that the asset, process, or system being accepted today will not become an expensive mystery tomorrow. It preserves institutional memory before it becomes tribal knowledge. It separates what was actually done from what was believed to have been done. It creates a record that can survive turnover, disputes, emergencies, audits, and time.

In that sense, verification is not just a control.

It is a resilience function.

The First Record Matters

The first record of a system has unusual power.

It becomes the baseline. It informs future decisions. It shapes maintenance assumptions. It influences risk models. It determines what teams believe is true. It becomes the reference point for every later investigation.

If that first record is weak, every future decision inherits that weakness.

The system may still function. It may even perform well enough for a while. But underneath the performance is a question that has not been answered:

Do we actually know what we have?

That question becomes more expensive the longer it is deferred.

Because once the system is live, every correction is harder. Roads are built. Customers are connected. Crews move on. Vendors change. People leave. Records drift. Conditions change. What could have been verified at birth must now be reconstructed through investigation, excavation, outage response, or costly rework.

That is the high-interest version of technical debt.

Closing the Gap Earlier

The lesson is not that every system must be perfect at birth.

That is unrealistic.

The lesson is that known uncertainty must be captured, governed, and carried forward honestly. If there is an exception, name it. If the record is incomplete, mark it. If the evidence is weak, do not pretend it is strong. If verification did not happen, do not let the system behave as though it did.

The most dangerous defects are not always the defects themselves.

They are the defects that enter the system disguised as completion.

That is where operational debt begins.

And that is where leaders have a choice.

They can keep accepting systems that appear ready but cannot prove their own condition. Or they can decide that the moment of birth matters: the handoff, the evidence, the inspection, the record, the verification, the standard.

Because the painful cliff rarely appears all at once.

It is built quietly through tolerated gaps.

And when the bill finally arrives, it usually includes interest.

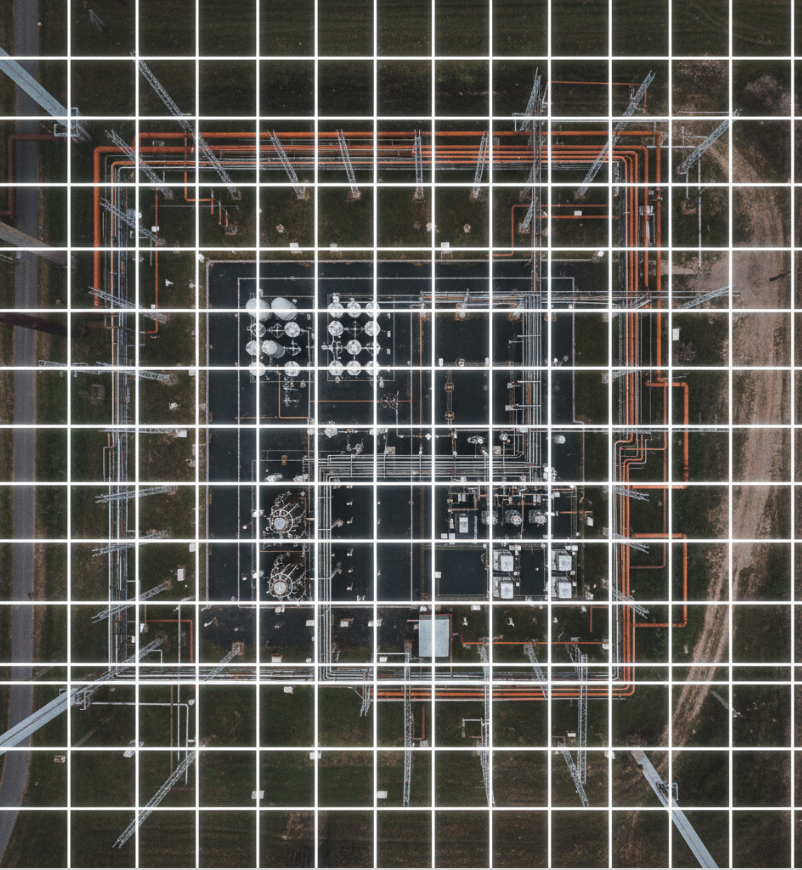

The Network Looked Healthy, Right up Until It Failed

Dashboards are green.

Metrics are within thresholds.

SLAs are being met.

But these signals reflect performance—not reality.

They tell you how the network is behaving. They don't tell you how it was built.

And that distinction matters.

Infrastructure doesn't fail when dashboards turn red. It fails when underlying conditions reach a breaking point:

shallow burial

poor installation practices

unverified field conditions

These are not captured in real-time performance metrics.

They sit quietly beneath the surface—until they don't.

By the time an issue appears in the network operations center (NOC), the failure has already occurred.

The system is no longer preventing failure—it is reacting to it.

This creates a dangerous illusion: visibility begins to feel like control.

But measuring performance is not the same as verifying integrity.

True control starts earlier.

It starts at the point of deployment—where infrastructure is installed, validated, and recorded against a known standard.

With the tools available today—from digital twins to device-level verification—this is no longer theoretical. It is achievable.

The question is whether we continue to manage outcomes or start verifying the conditions that create them.

Outcomes are what we measure. Conditions are what we control.

This is Part 3 in a series on infrastructure resilience.

By the time a network fails, the real mistake has already been made. It just hasn’t shown up yet.

Resilience was never optional. It was deferred.

Across markets, infrastructure failures are still treated as unexpected events.

A cable cut here.

An outage there.

A service disruption blamed on “external factors.”

But step back, and a pattern emerges.

Most of these failures were not accidents.

They were decisions.

Decisions to:

reduce burial depth to accelerate deployment

skip proper verification to meet timelines

defer quality checks to “later phases”

prioritize coverage metrics over build integrity

And “later” rarely comes.

Years down the line, the network begins to fail—not because it was unlucky, but because it was never built to last.

The cost shows up everywhere:

repeated truck rolls

rising maintenance spend

customer churn

reputational damage

We see it in capital efficiency—where churn erodes the value of past investment.

We see it in operating expense—through repeated truck rolls and revolving maintenance cycles.

And beyond the numbers, the impact runs deeper.

The price of deferring resilience is not easily reversed.

It compounds across the system—eroding performance, straining budgets, and exhausting operations teams.

With the tools available today—from digital twin modeling to automated compliance tracking—resilience is no longer a subjective trade-off.

It can be quantified, enforced, and verified before a single cable is laid.

The only remaining question is whether we treat it as a requirement—or as a regret.

Resilience was never optional.

It was deferred.

Neutral Infrastructure Requires a Trust Layer

Neutral infrastructure models are gaining traction.

The premise is straightforward:

Build shared infrastructure

Allow multiple operators to utilize it

Reduce duplication

Improve market efficiency

In theory, this creates independence.

In practice, it introduces a new problem.

When infrastructure is shared, responsibility becomes distributed.

One party builds

Another operates

Multiple entities depend on the same asset

No single participant fully owns:

verification

coordination

long-term integrity

This creates a structural gap.

When multiple operators share the same infrastructure, the asset is shared—but responsibility is not.

Each excavation, repair, or expansion introduces risk to every other participant.

Without coordination, what should create efficiency instead compounds vulnerability.

A neutral network is only as strong as the confidence placed in it.

Operators will ask:

Can this infrastructure be relied on?

Will outages be frequent?

Is the data describing the network accurate?

Without clear answers, participation slows.

Not because of lack of demand.

But because of uncertainty.

Neutral infrastructure initiatives often focus on:

ownership structure

access models

commercial frameworks

Less attention is given to:

verifiable as-built data

coordinated activity across stakeholders

measurable infrastructure integrity

These are not secondary concerns.

They determine whether the model works.

For a shared infrastructure model to scale, it requires:

independent verification of what is built

visibility into where infrastructure exists

coordination mechanisms across all participants

a system that reduces uncertainty over time

In other words:

A trust layer.

The Trust Layer is not theoretical—it emerges from the coordination systems already discussed: centralized registries, structured excavation protocols, and shared governance models.

Without this layer, the system begins to degrade:

repeated infrastructure damage

disputes between stakeholders

increasing operational costs

declining confidence in the asset

The model remains “neutral” in structure…

…but unstable in operation.

When trust is introduced as a system:

infrastructure becomes verifiable

risk becomes measurable

coordination becomes enforceable (over time)

participation becomes easier

Confidence replaces assumption.

Shared infrastructure is not defined by access alone.

It is defined by confidence.

From shared access to shared confidence: that is the role of the Trust Layer.

Scaling Nigeria’s Digital Backbone: Why Resilience Must Be Embedded From Day One

Nigeria stands at a pivotal moment in its digital evolution. With the launch of Project BRIDGE and the ambition to expand the national fiber backbone from approximately 35,000 kilometers to 125,000 kilometers, the country has a once-in-a-generation opportunity to redefine its digital infrastructure landscape. Programs of this magnitude do more than extend connectivity — they shape economic resilience, enterprise confidence, and long-term capital preservation.

For infrastructure at this scale, resilience must be embedded at the foundation, not layered on after deployment.

Experience in global telecommunications and cloud infrastructure governance suggests that large-scale backbone programs tend to succeed not only through capital deployment, but through early institutional alignment. When resilience architecture is embedded at the design stage, lifecycle stability improves materially and capital risk declines over time.

The Scaling Paradox

Large infrastructure programs face a common paradox: the urgency to deploy often outpaces the systems designed to protect what is being deployed.

Fiber expansion is visible. Governance architecture is not.

Yet in high-growth environments, fragility rarely stems from a lack of cable. It stems from insufficient coordination, inconsistent standards, and the absence of integrated accountability mechanisms.

When deployment accelerates without synchronized resilience protocols, maintenance risk compounds. Over time, operational instability erodes both public confidence and investor return.

For a program backed by international financing and structured through a public-private partnership model, long-term asset durability is as important as rollout velocity.

Why Deployment Standards Alone Are Not Enough

Physical design matters — burial depth, conduit quality, aerial corridor utilization, redundancy topology. But physical decisions alone do not guarantee resilience.

In rapidly expanding markets, fiber disruption frequently results from:

Uncoordinated excavation activity

Incomplete asset mapping

Weak contractor accountability

Fragmented right-of-way enforcement

Absence of harmonized “call-before-you-dig” systems

These are not engineering failures. They are governance design gaps.

As scale increases, so does the importance of institutional alignment.

A National Fiber Resilience Architecture

To protect capital, reduce lifecycle maintenance costs, and strengthen enterprise trust, resilience can be institutionalized through a structured governance framework.

Four foundational components merit consideration:

1. Standardized Physical Deployment Protocols

Clear burial depth specifications

Mandated multi-duct conduit use in high-density corridors

Defined criteria for aerial vs. buried deployment

Independent quality inspection during construction

Standardization ensures that resilience is not dependent on contractor interpretation.

2. Centralized Digital Plant Registry

A national GIS-based backbone registry should not exist in isolation. For resilience to be effective, the registry must be interoperable across federal and state ministries, road construction authorities, and utility providers.

Core components may include:

Unified backbone route mapping

Integration with excavation permitting systems

Real-time update requirements for route modifications

Cross-utility data sharing between fiber, power, and public works agencies

If the SPV maintains one map while state ministries operate from another, infrastructure coordination gaps will persist. Interoperability ensures that fiber route data is embedded directly into excavation workflows, reducing accidental disruption during road expansion or utility upgrades.

Digital visibility must translate into operational synchronization.

3. Excavation Governance & Accountability Framework

In large-scale infrastructure programs, resilience risk often concentrates at the last operational layer — where sub-contractors execute trenching and utility modifications.

Addressing what might be termed “last-mile liability” requires clearly defined accountability structures, including:

Mandatory locate verification prior to excavation

Contractor certification tied to compliance history

Defined incident reporting protocols

Graduated penalty mechanisms for negligent damage

As deployment spans multiple states, harmonizing Right-of-Way (RoW) enforcement and excavation governance across jurisdictions will be critical. Resilience must be consistent across state lines.

4. Lifecycle Audit & Resilience Oversight

Resilience is not a one-time deployment feature. It is an ongoing discipline.

This discipline can be embedded through:

Annual infrastructure resilience reviews

Incident-rate transparency reporting

Cut-frequency reduction targets

SPV-level accountability metrics

This ensures that governance scales alongside network expansion.

Why This Matters For Capital Preservation

Project BRIDGE is structured as a public-private partnership with international development financing. In such models, asset durability directly influences:

Investor confidence

Cost of capital

Enterprise adoption rates

Long-term return stability

Resilience is not a delay mechanism. It is a capital protection strategy.

Embedding governance at the SPV charter and operational policy level before large-scale trenching begins ensures that institutional controls mature alongside physical expansion.

Retrofitting resilience after deployment is materially more expensive — financially and politically.

The Opportunity Before Construction Accelerates

Nigeria has a rare window of alignment:

Political will

International financing

Private sector participation

National digital ambition

These forces converge on a nation with one of the world’s youngest and most dynamic populations, increasingly integrated into the digital economy. As construction phases intensify, this may be the most effective moment to formalize resilience governance frameworks that will serve the country for decades.

The objective is not to slow deployment.

It is to ensure that 125,000 kilometers of fiber becomes a durable national asset rather than a maintenance-intensive liability.

Digital backbone expansion is foundational to enterprise performance, financial system reliability, cloud adoption, and long-term economic competitiveness.

Resilience must therefore be treated not as a secondary operational function, but as a structural design principle. As Project BRIDGE transitions from structuring to execution, formalizing a National Fiber Resilience Architecture within the SPV’s operating framework may represent one of the most impactful early governance decisions.

This alignment makes getting resilience right not only financially prudent, but strategically consequential for Nigeria’s long-term economic competitiveness.

Protecting Nigeria’s Fiber Backbone:

Harmonizing Excavation Governance for Long-Term Resilience

As Nigeria’s national fiber expansion accelerates, the geometry of infrastructure risk evolves. The simple line of a trench in one state becomes a point of vulnerability for a network segment hundreds of kilometers away.

The extension of backbone routes across multiple states will naturally coincide with increased road construction, utility trenching, private development, and urban density growth. In such environments, long-term network durability depends not only on engineering standards, but on coordination maturity across jurisdictions.

In the early phases of deployment, system stability often reflects construction quality. Over time, however, operational resilience becomes increasingly tied to governance alignment — particularly in excavation management and Right-of-Way oversight.

As buried infrastructure density increases, fragmented excavation protocols can introduce compounding exposure. This is not an engineering shortfall. It is a coordination challenge inherent in multi-jurisdiction infrastructure systems.

Excavation Governance as Lifecycle Capital Protection

Across global backbone networks, excavation-related damage remains one of the most common sources of service disruption. While initial deployment periods may appear stable, long-term cost predictability depends on harmonized governance mechanisms that mature alongside network complexity.

As utility overlap expands and urban activity intensifies, inconsistencies in excavation notification, documentation, or enforcement can gradually influence:

Maintenance intensity

OPEX volatility

Insurance exposure

SLA performance stability

Enterprise confidence

In national backbone systems designed to operate over multiple decades, early governance alignment often proves more consequential than incremental deployment acceleration.

These pressures rarely surface immediately. As operational complexity increases, their effects compound.

In large-scale public-private partnership environments, such volatility directly affects asset durability and margin predictability. Excavation governance, therefore, should be viewed not as administrative overhead, but as preventative capital allocation embedded into lifecycle economics.

Federal Baseline, State Augmentation: Designing for Alignment

National backbone programs operating within federal systems must balance two legitimate realities:

The need for standardized infrastructure protection mechanisms

The importance of preserving state-level nuance and jurisdictional authority

As deployment spans multiple Nigerian states, a federally defined resilience baseline could establish minimum excavation protection standards while allowing states to augment requirements based on local terrain, density, or environmental considerations.

Such a baseline may include:

Mandatory pre-excavation locate verification

Standardized GIS integration requirements

Defined burial depth minimums

Incident reporting protocols

Contractor certification criteria

States would retain authority to enhance these protections.

The objective is not centralization. It is harmonization.

Where deviations from baseline standards are proposed, structured exception review mechanisms can help evaluate system-wide exposure implications. In interconnected backbone systems, localized adjustments must be assessed within the context of the entire chain. Variability in excavation oversight within one jurisdiction can introduce disproportionate exposure to adjacent network segments, particularly where redundancy routes intersect.
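The baseline-plus-augmentation idea can be sketched as a simple merge rule: a state may tighten any requirement, and anything looser than the federal floor is held at the baseline and routed to exception review. This is a minimal illustration, not a proposed standard. The field names, the numeric values, and the `effective_standard` helper are all hypothetical.

```python
# Hypothetical federal floor. Values are placeholders, not proposed standards.
FEDERAL_BASELINE = {
    "min_burial_depth_m": 1.0,
    "locate_verification_required": True,
    "incident_report_deadline_hours": 24,
}

# For each field, how to pick the stricter of two values.
STRICTER = {
    "min_burial_depth_m": max,                  # deeper is stricter
    "locate_verification_required": lambda a, b: a or b,
    "incident_report_deadline_hours": min,      # faster reporting is stricter
}


def effective_standard(baseline, state_rules):
    """Return (effective rules, fields needing exception review).

    A state value that is stricter than the baseline is adopted.
    A state value that is looser is overridden by the baseline and
    flagged so it can go through structured exception review.
    """
    effective, exceptions = {}, []
    for field, base_value in baseline.items():
        proposed = state_rules.get(field, base_value)
        stricter = STRICTER[field](base_value, proposed)
        effective[field] = stricter
        if stricter != proposed:
            exceptions.append(field)
    return effective, exceptions


# Hypothetical state: deeper burial (allowed), slower reporting (flagged).
state_rules = {"min_burial_depth_m": 1.5, "incident_report_deadline_hours": 48}
effective, exceptions = effective_standard(FEDERAL_BASELINE, state_rules)
```

In this sketch the state's deeper burial requirement is adopted as written, while the slower reporting deadline is held at the baseline and listed for review rather than silently accepted.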

National backbone infrastructure functions as an interconnected system. Its durability depends not solely on the strength of individual segments, but on governance consistency across every link.

Embedding Governance Into Digital Workflows

As backbone registries mature, excavation governance can evolve beyond manual notification models.

Where permitting systems are digitally linked to a centralized GIS backbone registry — as proposed in the foundational resilience architecture — locate verification can become an automated workflow requirement rather than a discretionary procedural step.

In such models:

Excavation permits are cross-checked against backbone route data before approval

Route conflicts are flagged within the permitting platform

Compliance documentation is digitally logged

As-built updates are synchronized in real time

This creates a closed governance loop in which asset visibility, permit issuance, and compliance verification reinforce one another.
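A minimal sketch of the cross-check step, assuming route data is held as straight segments in projected coordinates measured in metres. The `RouteSegment` type, the `review_permit` function, the route identifiers, and the 5-metre buffer are hypothetical. A production registry would use real GIS geometry and a buffer set by policy.

```python
from dataclasses import dataclass
from math import hypot
from typing import Dict, List, Tuple

Point = Tuple[float, float]  # (x, y) in metres, projected coordinates


@dataclass(frozen=True)
class RouteSegment:
    route_id: str
    start: Point
    end: Point


def distance_to_segment(point: Point, seg: RouteSegment) -> float:
    """Shortest planar distance from a point to a line segment."""
    (px, py), (ax, ay), (bx, by) = point, seg.start, seg.end
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0:  # degenerate segment: start and end coincide
        return hypot(px - ax, py - ay)
    # Project the point onto the segment and clamp to its endpoints.
    t = ((px - ax) * dx + (py - ay) * dy) / length_sq
    t = max(0.0, min(1.0, t))
    return hypot(px - (ax + t * dx), py - (ay + t * dy))


def review_permit(dig_point: Point, registry: List[RouteSegment],
                  buffer_m: float = 5.0) -> Dict[str, object]:
    """Flag every registered route within buffer_m of the proposed dig."""
    conflicts = sorted({seg.route_id for seg in registry
                        if distance_to_segment(dig_point, seg) <= buffer_m})
    status = "LOCATE_REQUIRED" if conflicts else "CLEAR"
    return {"status": status, "conflicts": conflicts}


# Hypothetical registry with two parallel backbone routes 50 m apart.
registry = [
    RouteSegment("BB-001", (0.0, 0.0), (100.0, 0.0)),
    RouteSegment("BB-002", (0.0, 50.0), (100.0, 50.0)),
]
near = review_permit((40.0, 3.0), registry)   # 3 m from BB-001
far = review_permit((40.0, 25.0), registry)   # 25 m from both routes
```

The design point is that the permit cannot reach approval without passing through the check. The locate requirement is produced by the workflow itself, not remembered by an individual.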

Over time, digital integration reduces reliance on individual procedural memory and strengthens institutional consistency.

International Precedent: Harmonized Excavation Governance in Federal Systems

Federal systems globally have recognized excavation governance as a foundational protection mechanism for buried infrastructure.

In the United States, the 811 “Call Before You Dig” framework establishes a nationally recognized pre-excavation notification standard across all states, while preserving state-level enforcement authority. This creates shared compliance norms across diverse jurisdictions.

In the United Kingdom, centralized platforms such as LinesearchbeforeUdig (LSBUD) integrate infrastructure visibility directly into excavation workflows, improving coordination between contractors and asset owners.

Similar coordinated notification systems operate across parts of Europe and Australia, reflecting a broader institutional recognition: as underground infrastructure density increases, interoperability and standardized locate protocols become essential to long-term asset stability.

These systems vary in implementation detail. What they share is structural alignment — harmonized baseline protection mechanisms operating within jurisdictional diversity.

As Nigeria’s backbone expansion advances, comparable coordination principles may become increasingly relevant to preserving lifecycle cost stability.

Accountability Beyond Documentation

Governance strength is not defined by written standards alone.

It is demonstrated through enforcement rigor and compliance verification.

Clear excavation protocols without inspection architecture can lead to gradual enforcement drift — particularly as contractor turnover increases and operational focus shifts over time.

Independent quality oversight mechanisms — similar to third-party inspection models used in global infrastructure programs — may strengthen resilience assurance. These can include:

Randomized trench depth verification

Digital documentation of as-built compliance

Spot audits across jurisdictions

Compliance history tracking

Standardized incident transparency reporting

In addition to structured penalty mechanisms for negligent damage, resilience frameworks may benefit from positive incentive structures.

Contractors demonstrating consistent compliance, low incident frequency, and documented adherence to excavation protocols could be recognized through preferred contractor status designation. Over time, such recognition can translate into competitive advantage in procurement processes. For example, a contractor with a Tier-1 compliance history could qualify for a streamlined bidding process or a reduced performance bond requirement, directly linking discipline to improved business terms. This reinforces resilience as a market differentiator rather than solely a compliance obligation.
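One way such tiering could be made mechanical is sketched below. Everything in it is an assumption made for illustration: the `ContractorRecord` fields, the tier names, and the thresholds are hypothetical, and an actual scheme would be defined by the program's own governance rules.

```python
from dataclasses import dataclass


@dataclass
class ContractorRecord:
    name: str
    jobs_completed: int
    damage_incidents: int
    audits_passed: int
    audits_total: int


def compliance_tier(rec: ContractorRecord) -> str:
    """Assign a tier from incident rate and audit pass rate.

    Thresholds are illustrative placeholders only.
    """
    if rec.jobs_completed == 0 or rec.audits_total == 0:
        return "UNRATED"  # no history yet, so no tier is claimed
    incident_rate = rec.damage_incidents / rec.jobs_completed
    audit_pass_rate = rec.audits_passed / rec.audits_total
    if incident_rate <= 0.01 and audit_pass_rate >= 0.95:
        return "TIER_1"
    if incident_rate <= 0.05 and audit_pass_rate >= 0.80:
        return "TIER_2"
    return "TIER_3"


# Hypothetical contractors.
steady = ContractorRecord("Steady Civil", 200, 1, 20, 20)
careless = ContractorRecord("Rush Trenching", 40, 3, 6, 10)
newcomer = ContractorRecord("New Entrant", 0, 0, 0, 0)

tiers = {c.name: compliance_tier(c) for c in (steady, careless, newcomer)}
```

Keeping an explicit unrated state matters in a sketch like this: a contractor with no history should not inherit preferred status by default, nor be penalized for a record that does not yet exist.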

This balance between accountability and incentive alignment strengthens governance durability.

Institutional Alignment Within The SPV Framework

Large-scale backbone deployment is visible. Governance maturity is not.

Yet as infrastructure density increases and multi-state coordination intensifies, long-term durability depends increasingly on institutional alignment rather than construction strength alone.

As Project BRIDGE advances into execution phases, embedding excavation governance maturity within the SPV’s operating framework may prove decisive in preserving capital stability. The SPV structure provides the operational locus through which harmonized baseline standards, structured exception review processes, digital registry integration, inspection oversight, and performance transparency can be institutionalized.

The objective is not to slow development activity or constrain state authority. It is to ensure that 125,000 kilometers of interconnected fiber behaves as a coordinated national asset over decades of economic use.

Operational durability is preserved not only through engineering design, but through governance that matures at the same pace as complexity.

For Project BRIDGE, resilience at scale will not be defined by deployment velocity alone. It will be determined by whether governance maturity is embedded within the SPV architecture before network complexity compounds.

RESILIENCE AS CAPITAL STRATEGY

STRENGTHENING THE SPV OPERATING FRAMEWORK

Large-scale backbone expansion is often discussed in terms of connectivity, access, and deployment velocity.

In public-private infrastructure models, however, resilience carries broader implications. It is not solely an operational attribute. It is a determinant of capital durability, lender confidence, and long-term return stability.

As Nigeria advances Project BRIDGE through structured financing and private-sector participation, the operating maturity of the Special Purpose Vehicle (SPV) becomes central to preserving asset value over decades of economic use.

Resilience, in this context, is not an ancillary technical consideration. It is a capital strategy.

Infrastructure as a Multi-Decade Financial Asset

National fiber backbones are designed to operate across multi-decade horizons. Their value lies not only in initial deployment, but in sustained performance under increasing complexity.

In such systems, long-term asset stability influences:

Margin predictability

OPEX volatility

Service reliability

Enterprise adoption rates

Insurance exposure

Financing flexibility

Operational disruption, governance inconsistency, or incident-rate volatility can introduce risk signals that extend beyond maintenance cost. They influence capital perception.

In infrastructure finance, perceived volatility is often penalized more heavily than moderate baseline risk. Predictability, transparency, and institutional discipline strengthen long-term financing conditions.

Resilience maturity, therefore, should be viewed as a financial stabilizer — not merely an engineering safeguard.

The SPV as Institutional Architecture

The SPV structure is more than a vehicle for capital deployment. It is the institutional architecture through which governance maturity must be embedded.

As Project BRIDGE advances into execution phases, the SPV operating framework may serve as the central locus for:

Harmonized baseline protection standards

Structured exception review mechanisms

Centralized GIS registry governance

Independent inspection oversight

Contractor performance scoring systems

Incident-rate transparency protocols

Structured exception review serves a dual function. Beyond assessing localized exposure, it mitigates the risk of gradual governance drift — where incremental deviations, if left unexamined, can erode system-wide protection standards over time. By anchoring deviations within a centralized review framework, the SPV reinforces baseline consistency across jurisdictions and protects long-term asset durability.

Embedding these elements within the SPV framework ensures that governance alignment scales alongside physical deployment. Absent institutional anchoring, resilience mechanisms risk becoming fragmented across jurisdictions, weakening long-term consistency.

The SPV provides the structural mechanism through which harmonization can be sustained.

Capital Exposure and Governance Volatility

In multi-jurisdiction backbone systems, governance variability can translate into financial exposure.

Fragmented enforcement or inconsistent oversight may gradually influence:

Maintenance intensity

SLA credit obligations

Insurance claims frequency

Emergency repair mobilization costs

Enterprise confidence

These factors do not necessarily destabilize projects immediately. Over time, however, they can introduce volatility that affects margin predictability.

For international development finance partners and long-horizon infrastructure stakeholders, volatility is a material consideration. Stability in reporting, incident reduction, and compliance maturity strengthens capital confidence.

In contemporary infrastructure financing models, resilience and governance metrics are increasingly embedded within ESG-linked or sustainability-linked covenant structures. Where operational transparency and risk-reduction mechanisms are demonstrably robust, financing terms may reflect reduced perceived exposure.

For the SPV, institutionalizing excavation governance, compliance reporting, and incident-rate transparency may therefore contribute not only to operational stability, but to improved refinancing flexibility and long-term capital efficiency.

Resilience governance is therefore inseparable from capital preservation.

Resilience Reporting as Transparency Signal

One mechanism through which resilience maturity can be institutionalized is structured reporting.

A centralized Resilience Performance Dashboard housed within the SPV framework may include:

Excavation incident frequency trends

Compliance audit outcomes

Exception review approvals and justifications

Contractor performance tiering

Cut-reduction trajectory benchmarks

Cross-jurisdiction coordination metrics

Such reporting provides visibility into governance maturity beyond deployment statistics.

Transparent performance tracking signals institutional discipline to capital stakeholders. It reinforces ESG alignment, strengthens accountability, and provides measurable indicators of operational durability.

Resilience, when reported systematically, becomes auditable rather than assumed.
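As a small illustration of why such metrics need to be normalized, the sketch below computes cuts per 1,000 route-kilometers and checks the result against a reduction target. All figures are invented for the example, and the function names and the 20 percent target are hypothetical.

```python
def cuts_per_1000_km(cuts: int, route_km: float) -> float:
    """Normalize cut counts by network length so years are comparable."""
    if route_km <= 0:
        raise ValueError("route_km must be positive")
    return cuts / route_km * 1000


def reduction_achieved(previous_rate: float, current_rate: float) -> float:
    """Fractional reduction in cut rate from one period to the next."""
    return (previous_rate - current_rate) / previous_rate


# Hypothetical figures: the network grows, and raw cut counts grow with it.
year_1 = {"cuts": 420, "route_km": 35_000}
year_2 = {"cuts": 540, "route_km": 60_000}

rate_1 = cuts_per_1000_km(**year_1)
rate_2 = cuts_per_1000_km(**year_2)
reduction = reduction_achieved(rate_1, rate_2)

TARGET_REDUCTION = 0.20  # hypothetical annual target
target_met = reduction >= TARGET_REDUCTION
```

In this invented example the raw number of cuts rises while the rate per 1,000 kilometers falls by a quarter. A dashboard that reported only raw counts would show deterioration where governance was in fact improving.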

Embedding Alignment Before Complexity Compounds

Early phases of deployment often reflect construction strength. Long-term asset stability reflects governance maturity.

As backbone density increases and multi-state coordination intensifies, the importance of institutional alignment grows accordingly.

Embedding resilience governance within the SPV operating framework — before operational complexity fully compounds — may represent one of the most consequential capital preservation decisions available at this stage of Project BRIDGE.

Resilience at scale will not be defined by deployment velocity alone.

It will be determined by whether governance maturity, transparency, and accountability are institutionalized early enough to sustain performance over decades.

In national backbone systems, durability is engineered once — but preserved continuously.

Capital strategy and resilience strategy, therefore, must operate as one.

Why The Fix Is Known — And Still Not Chosen

The Invisible Wall

By the time most people reach this point, the question feels obvious.

If the risks are visible,

if the failure modes are understood,

if the incentives are misaligned but not mysterious,

then why doesn’t anything change?

The uncomfortable answer is this:

the fix is not missing.

It is simply not chosen.

The Myth of Ignorance

We often assume systems persist because decision-makers don’t understand them.

That assumption is comforting. It implies that insight alone could unlock change.

But in most operational systems, that isn’t true.

The mechanics are understood.

The trade-offs are named privately.

The technical debt is visible to those closest to it.

What’s absent is not awareness — it’s incentive alignment.

When Knowing Isn’t Enough

Most of the improvements that would stabilize the system are well known:

more accurate categorization instead of compressed averages

ranges instead of point estimates

capacity buffers instead of perpetual stretch

resilience designed into the system instead of extracted from people

None of this is exotic.

What is expensive is who pays for it — and when.

These fixes demand:

upfront cost

organizational humility

time horizons longer than most tenures

acceptance of lower short-term flexibility

They require someone to absorb pain now so that others can benefit later.

That is rarely how power is structured.

The Asymmetry That Holds Everything in Place

Today’s system functions because risk is displaced, not resolved.

Providers push variance downstream.

Vendors absorb uncertainty to stay alive.

Operators compensate with judgment and care.

As long as someone else is holding the load, the system appears to work.

Dashboards remain green.

Narratives remain intact.

Replacement remains easier than repair.

From a distance, endurance looks like health.

The Role of Time

Time is the quiet enforcer.

Leaders nearing the end of their tenure can often outrun the consequences of today’s decisions. Investors operating on fixed horizons are rewarded for extracting value before fragility surfaces. Vendors cannot pause to renegotiate the structure that feeds them.

Everyone is behaving rationally.

Just not collectively.

The system rewards those who move fastest — not those who make it last.

Why Change Rarely Comes Voluntarily

Meaningful reform doesn’t usually emerge from consensus or goodwill.

It emerges when the cost of not fixing the system finally exceeds the cost of doing so.

When vendor churn accelerates faster than replacement.

When judgment drains out faster than training can replace it.

When automation amplifies errors instead of smoothing them.

When the system can no longer hide where resilience was coming from.

Until then, the invisible wall holds.

This Is Not Cynicism

This isn’t an argument that people don’t care.

It’s an explanation of why caring isn’t enough.

The barrier isn’t intelligence or morality.

It’s incentive gravity.

Systems move in the direction they are rewarded to move — even when the destination is known to be wrong.

What Naming the Wall Does

Naming this dynamic doesn’t fix it.

But it does something quieter and more important.

It stops mistaking silence for ignorance.

It stops blaming individuals for structural outcomes.

It restores agency to those who understand the system but feel powerless to change it alone.

It reframes frustration as clarity.

And clarity is often the first thing a system needs — long before it is ready to act.

Where This Leaves Us

The fix has never been hidden.

It has simply been larger, slower, and more uncomfortable than the system was willing to choose.

For now.

Acceleration Without Understanding

The AI View

When systems strain, organizations reach for something superior.

A new leader with pristine credentials.

A new platform proven elsewhere.

A new framework that promises clarity where ambiguity has grown uncomfortable.

AI arrives into this exact pattern — the final and most powerful iteration of the borrowed playbook.

The Old Reflex, Upgraded

This instinct isn’t new.

When complexity overwhelms existing structures, the response has always been substitution:

replace judgment with authority

replace experience with process

replace ambiguity with certainty

AI simply upgrades the mechanism.

It doesn’t arrive as a suggestion.

It arrives as inevitability.

Because unlike previous interventions, AI doesn’t just support decision-making — it threatens to replace it.

What AI Actually Does

AI does not understand systems.

It recognizes patterns.

Those patterns are derived from historical data, encoded assumptions, and prior decisions — many of which were themselves shaped by incomplete models, mispriced resilience, and displaced risk.

This distinction matters.

AI doesn’t generate new judgment.

It amplifies the judgment already embedded in the system.

If the inputs reflect a healthy model, AI accelerates effectiveness.

If the inputs reflect a distorted one, AI accelerates failure.

Confidently.

Consistently.

At scale.

The Temptation to Predict Variance

In ops-heavy environments, the promise of AI is seductive.

If humans struggle with variance, perhaps models can predict it.

If judgment is inconsistent, perhaps algorithms can normalize it.

If forecasting fails, perhaps more data will make it precise.

But this misunderstands the problem.

Variance is not a data deficiency.

It is a property of the physical world.

Trying to predict every raindrop doesn’t remove the rain.

It just distracts from building better umbrellas.

When Escape Hatches Disappear

Historically, systems survived their own flaws because humans compensated.

Operators adjusted.

Vendors improvised.

Leaders exercised discretion.

These were escape hatches.

They were informal, imperfect, and unscalable — but they allowed the system to bend rather than break.

AI removes those escape hatches.

Decisions become algorithmic, not discretionary.

Failures become systemic, not local.

Responses become acceleration, not reflection.

When the model fails, it fails everywhere at once.

Judgment Is a Use-It-or-Lose-It Capability

There is a deeper cost that rarely appears in business cases.

Judgment atrophies.

When technicians defer to prompts instead of principles, the organization slowly loses its operational brain. Skills that once lived in people are externalized into systems that cannot improvise when reality deviates — which it always does.

When the system finally fails, there is no one left who knows how to fix it without a screen.

That is not efficiency.

That is fragility with confidence.

The Illusion of Objectivity

AI feels neutral.

It doesn’t argue.

It doesn’t tire.

It doesn’t escalate emotionally.

This makes its outputs feel authoritative — even when they are wrong.

And because AI reflects institutional assumptions back to leadership with mathematical certainty, it becomes harder to question the model than to question the people it replaces.

The organization doesn’t become smarter.

It becomes more convinced.

What AI Cannot Fix

AI cannot repair misaligned incentives.

It cannot lengthen time horizons.

It cannot price resilience correctly.

It can only operate within the system it is given.

And if that system was already extracting resilience from people, AI will simply do it faster.

The Real Choice

The question is not whether AI belongs in operations.

It does.

The question is whether organizations are willing to understand the system — its time constants, its human buffers, its irreducible variance — before asking a tool to make it run faster.

Acceleration without understanding doesn’t solve fragility.

It completes it.

Where This Leaves Us

AI is not the villain.

It is the mirror.

It reflects the system’s assumptions back at scale — with speed, confidence, and no regard for consequence.

Whether it becomes a force for resilience or collapse depends entirely on what the system brings to it.

That choice is still open.

For now.

When The Playbook Breaks

The Process View

When systems begin to strain, organizations do the responsible thing.

They bring in experts.

Consultants arrive with experience from environments where scale is clean, variance is bounded, and outcomes can be normalized. Frameworks are introduced. Playbooks are borrowed. Processes are standardized.

None of this is foolish.

It is rational.

And it often works — just not here.

The Appeal of the Borrowed Playbook

Most modern process frameworks were forged in industries where work is:

digital

repeatable

reversible

statistically stable

In those environments, averages are meaningful. Variance is noise. Optimization is additive.

Operations-heavy industries look similar from a distance. Work can be categorized. Costs can be averaged. Performance can be benchmarked.

So the instinct is natural:

compress the system into something legible.

Where Reality Refuses Compression

In physical operations, variance is not noise.

It is the work.

Consider three jobs that appear identical on paper: replacing a 300-foot damaged fiber segment.

One is aerial — straightforward, two bucket trucks, minimal disruption.

One is buried in dirt — trenching, locates, permits, traffic control.

One is under asphalt with rock — boring, rock adders, restoration, staging, and risk.

From a spreadsheet perspective, these are the same unit.

From reality’s perspective, they are different species.

Costs range from thousands to tens of thousands.

Timelines diverge.

Risk profiles explode.

The average doesn’t describe the work — it describes a scenario that does not exist.
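The arithmetic is easy to sketch. The three cost figures below are hypothetical, chosen only to sit within the thousands to tens of thousands range described above. They are not field data.

```python
from statistics import mean

# Hypothetical costs for the "same" 300-foot replacement, in dollars.
repair_costs = {
    "aerial": 4_000,
    "buried_dirt": 12_000,
    "asphalt_rock": 45_000,
}

average_cost = mean(repair_costs.values())

# Budget error if every job is funded at the average.
# Positive means overfunded, negative means underfunded.
budget_error = {job: average_cost - cost for job, cost in repair_costs.items()}

# The job whose real cost lies closest to the average.
closest_job = min(repair_costs, key=lambda job: abs(budget_error[job]))
closest_gap = abs(budget_error[closest_job])
```

With these numbers the average comes to roughly 20,333 dollars. Even the closest job, the buried-in-dirt repair, misses it by more than 8,000 dollars. The figure that lands in the budget matches none of the jobs it is supposed to represent.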

The Seduction of Precision

Faced with this variance, organizations don’t retreat from modeling.

They double down.

More software.

More fields.

More categories.

More enforcement.

The goal becomes precision.

But precision is not the same as accuracy.

When tools demand a single point instead of a range, the system is forced to lie — politely, consistently, and with confidence.

A buried segment in dirt is predictable.

A rock vein is not.

To the process, that’s a variance request.

To the P&L, it’s a structural break.

Vendors Feel This First

Nowhere is this tension felt more acutely than on the vendor side.

In the build phase, forecasting is easier. Quantities are known. Footage is planned. Resources can be staged.

Operations are different.

Vendors are asked to price work without guaranteed volume, while carrying fixed costs for equipment, labor, insurance, and compliance.

Price too high, and you lose the work.

Price too low, and you subsidize it.

Meanwhile, providers — facing their own margin pressure — push rates down further, assuming efficiency can compensate for uncertainty.

Nothing about the physics changes.

Only who absorbs the variance.
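The vendor's bind can be written as a break-even calculation. The sketch assumes a simple cost model in which annual fixed costs are spread across however many jobs actually materialize. The function names and every figure are hypothetical.

```python
def break_even_price(fixed_costs: float, variable_cost_per_job: float,
                     jobs: int) -> float:
    """Price per job at which revenue exactly covers total cost."""
    if jobs <= 0:
        raise ValueError("jobs must be positive")
    return fixed_costs / jobs + variable_cost_per_job


def annual_margin(price: float, fixed_costs: float,
                  variable_cost_per_job: float, jobs: int) -> float:
    """Profit or loss for the year at a given price and realized volume."""
    return (price - variable_cost_per_job) * jobs - fixed_costs


# Hypothetical vendor: equipment, labor, insurance, and compliance are fixed.
FIXED = 600_000
VARIABLE = 3_000

# Break-even price at three possible volumes.
prices = {jobs: break_even_price(FIXED, VARIABLE, jobs)
          for jobs in (50, 100, 200)}

# The vendor quotes for 100 jobs, but only 50 arrive.
quoted = prices[100]
shortfall = annual_margin(quoted, FIXED, VARIABLE, jobs=50)
```

In this example the break-even price falls from 15,000 to 6,000 dollars as volume rises from 50 to 200 jobs. A vendor who quotes for 100 jobs and receives 50 ends the year 300,000 dollars short without having made a single operational mistake. The loss comes entirely from volume the vendor was never guaranteed.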

When Judgment Becomes the Liability

As process rigidity increases, something else quietly shifts.

Judgment is replaced with compliance.

Ranges are replaced with targets.

Experience is replaced with enforcement.

This isn’t just an efficiency move — it’s a trust signal.

When we replace judgment with metrics, we aren’t simply improving accountability.

We are signaling that we no longer trust the person closest to the work.

That trust debt compounds.

Judgment is a use-it-or-lose-it capability.

When it atrophies, the system loses its ability to respond when reality deviates — which it always does.

When failure finally arrives, no one knows how to fix it without a screen.

Why the Playbook Breaks

The playbook doesn’t fail because it’s poorly designed.

It fails because it assumes homogeneity in a system defined by variance.

It assumes reversibility in a system where mistakes harden into concrete and asphalt.

It assumes optimization is local when costs propagate nonlinearly.

And it assumes that what worked elsewhere will work again — if only enforced tightly enough.

At that point, process stops serving the system.

The system starts serving the process.

What Actually Breaks

The system doesn’t collapse when a model is wrong.

It collapses when everyone knows the model is wrong — and continues to use it anyway.

Trust erodes.

Vendors churn.

Operators disengage.

Margins thin.

Not because people are incompetent — but because the framework cannot hold the work it is being asked to describe.

This is not a failure of execution.

It is a failure of fit.

The Setup for Acceleration

When process fails, the instinct is not reflection.

It is acceleration.

If humans can’t manage the variance, perhaps machines can.

If judgment is unreliable, perhaps prediction can replace it.

That belief sets the stage for the next act.

What the Broadband Industry Misunderstood About Itself

The Capital and Industry View

The broadband industry did not fail in the way failure is usually measured.

Coverage expanded. Speeds increased dramatically. Capital flowed. Millions of households gained access to infrastructure that did not exist a decade earlier. By any build-phase metric, broadband was a success.

That is not the failure this essay examines.

The failure was subtler, and it became visible only once the industry crossed from building infrastructure to operating essential systems.

What broadband misunderstood about itself was not how to deploy fiber, attract capital, or compete on speed. It misunderstood the kind of system it was becoming once expansion slowed and durability mattered more than velocity.

The industry succeeded at construction.

It struggled with transition.

Speed Was Rational — and Still Incomplete

In its growth phase, broadband optimized relentlessly for speed.

This was not recklessness. It was necessity.

Competition intensified. Capital flowed. New entrants emerged. Differentiation moved quickly from availability to bandwidth, from bundles to gigabit promises. Deployment velocity became existential.

Incumbents raced to defend territory. New providers raced to claim it. Middle-mile operators formed. Vendors scaled. The system expanded at full throttle.

In that moment, speed was not a mistake.

It was the only move that made sense.

But optimization always comes with a trade.

What broadband optimized for was deployment velocity, not operational durability.

The cost of that trade was deferred.

Early Builders Paid the Pioneer Penalty

The first wave of builders paid for learning at scale.

They invested in architectures, tooling, labor models, and training programs based on the best information available at the time. Engineering assumptions hardened into standards. Field practices became institutionalized.

Years later, manufacturers simplified deployment with pre-connectorized fiber and modular components, reducing the need for the labor-intensive splicing that incumbents had already built entire organizations around.

The industry became more efficient — but not uniformly.

New entrants inherited cheaper, simpler deployment models. Early builders carried sunk costs that could not be unwound without disrupting live systems.

The market did not reward experience.

It rewarded timing.

Infrastructure Is a Long Game — Capital Often Is Not

Broadband is fundamentally a long-lived asset.

Fiber plants are designed to last decades. Returns accrue slowly. Value is realized through durability, not velocity.

But much of the capital flowing into the industry operated on a very different clock.

Three- to five-year horizons. Quarterly narratives. EBITDA projections that assumed operational stability as a given rather than a design requirement.

This created a structural mismatch.

A 30-year asset was being managed under short-money expectations.

Not because investors were malicious — but because their tools, incentives, and pattern libraries were built for industries where variability is lower and reversibility is higher.

Broadband is neither low-variance nor easily reversible.

Operations Was Treated as Elastic

As the build phase tapered and the industry shifted into operations and maintenance, something subtle happened.

Operational resilience was assumed to be flexible.

Costs could be squeezed. Vendors could be rotated. Teams could be leaned out. Judgment could be standardized.

Margins tightened, but dashboards stayed green — for a while.

What those dashboards could not show was where the resilience was coming from.

It was not embedded in the system.

It was embedded in people.

Operators absorbed ambiguity. Vendors stretched capacity. Tribal knowledge filled gaps the models did not capture.

Resilience was not designed.

It was extracted.

The Convergence Problem

The system did not fail abruptly.

Multiple stabilizers were eroding at different rates:

workforce experience thinning

vendor margins compressing

maintenance backlogs growing

capital expectations hardening

Each erosion felt survivable in isolation.

The miscalculation was assuming they would not converge.

But systems do not fail when one leg weakens.

They fail when enough legs weaken at once.

At that point, the chair cannot stand — not because everything collapsed, but because too much erosion aligned.
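
The convergence argument can be sketched as a toy model. Everything here is an assumption made for illustration: the starting health scores, the yearly erosion rates, the threshold at which a leg counts as weak, and the number of weak legs the system can tolerate. None of it is measured data.

```python
# Hypothetical stabilizers: (starting health, points lost per year).
# All values are illustrative assumptions.
stabilizers = {
    "workforce_experience": (100, 5),
    "vendor_margins": (100, 8),
    "maintenance_capacity": (100, 6),
    "capital_patience": (100, 10),
}

WEAK_BELOW = 50    # assumed: a leg counts as weak below this health score
LEGS_TO_FAIL = 3   # assumed: the system fails once this many legs are weak


def weak_legs(year):
    """Return the stabilizers that have eroded below the weak threshold."""
    return [
        name
        for name, (start, rate) in stabilizers.items()
        if start - rate * year < WEAK_BELOW
    ]


# First year in which any single leg is weak (survivable in isolation).
first_weak_year = next(y for y in range(1, 50) if len(weak_legs(y)) >= 1)

# First year in which enough legs are weak at once for the system to fail.
failure_year = next(y for y in range(1, 50) if len(weak_legs(y)) >= LEGS_TO_FAIL)

print(first_weak_year, failure_year)
```

With these assumed rates, the first leg weakens in year 6 and the system keeps standing. It fails in year 9, when a third leg crosses the threshold, even though no single stabilizer has reached zero.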

The industry did not run out of intelligence.

It ran out of slack.

Mispriced Resilience

Resilience was priced — just not correctly.

Leaders believed they had more time.

They believed erosion would remain staggered.

They believed experience could be transferred, standardized, or replaced.

What they underestimated was that tribal knowledge is not a scalable asset.

It does not transfer cleanly.

It does not appear on balance sheets.

And once lost, it does not regenerate on demand.

Elasticity only works when systems are allowed to recover.

Held under constant tension, an elastic system becomes brittle.

What Was Actually Misunderstood

The broadband industry misunderstood one core truth about itself:

It is not a speed business.

It is a durability business that requires speed upfront.

Those are not the same thing.

When durability is treated as free, it eventually becomes scarce.

When resilience is extracted instead of designed, it eventually disappears.

The result is not collapse.

It is fragility disguised as performance.

This Is Not an Anomaly

What we are seeing now is not a surprise.

It is the system behaving exactly as it was built to behave once the conditions changed.

The next phase will test something deeper:

whether borrowed processes, borrowed capital models, and borrowed acceleration tools can function in a system defined by physical reality, irreducible variance, and long time constants.

That question is still unfolding.

The Silence Between Signals

The Leadership View

From the leadership seat, the story looks different.

The warnings don’t arrive cleanly or in isolation. They arrive amid budget reviews, headcount constraints, board expectations, and an endless stream of competing priorities—each one urgent, each one defensible.

Every day brings more signals than any individual or team can fully absorb.

Most leaders are not ignoring risk.

They are prioritizing under constraint.

And that distinction matters.

The Signal-to-Noise Problem

As organizations scale, leaders stop receiving information as events and start receiving it as patterns.

Individual risks blur together. Each escalation competes with dozens of others. Everything feels important, which paradoxically makes it harder to act on anything decisively.

From this vantage point, deferral feels rational.

“We’ll revisit next quarter” isn’t dismissal.

It’s triage.

But triage assumes something critical:

that the system can safely hold what has been deferred.

That assumption is rarely examined.

When Decisions Are Made Quietly

In many cases, leadership does make a decision.

Capital is allocated elsewhere.

Resources are directed toward higher-visibility initiatives.

A calculated bet is placed that the system can tolerate degradation for a while longer.

What’s missing is not intent—but articulation.

Risk acceptance often remains implicit. The time horizon is undefined. The tolerance threshold is unspoken. And the ownership of consequence remains ambiguous.

From the leadership seat, the decision feels settled.

From the operator’s seat, it feels like nothing was decided at all.

That gap is not a communication failure.

It’s a structural one.

Narrative Pressure and Time Compression

Leadership operates under a different kind of load.

Dashboards roll up. Board decks flatten nuance. Performance is measured in quarters, not conditions. Explanations must fit into slides, not field reports.

This creates narrative pressure.

Green metrics become proxies for health. Variance becomes something to “manage,” not something to sit with. Ambiguity becomes a liability.

Under this pressure, leaders don’t eliminate risk.

They compress it.

And compression favors what can be reported over what can be felt.

The Illusion of Control

The danger isn’t that leaders are cynical.

It’s that the system rewards confidence.

When something goes wrong, the question isn’t “what did we assume?”

It’s “why didn’t this get escalated harder?”

This reframing subtly shifts accountability downward.

Risk that was consciously tolerated becomes failure that was insufficiently prevented. And because the original decision was never named, the system has no memory of having chosen the outcome.

This is how leadership feels blindsided by events they implicitly approved.

Where Trust Quietly Erodes

Trust doesn’t break when trade-offs are made.

It breaks when trade-offs are invisible.